A Crash Course on Quality Assurance: Take a Fresh Look at Your Call Center Quality Assurance Program

Managing the quality of your contact center is central to your job. You want your customers to leave every interaction happy with their outcome. And you want them to share their good experience with their friends. You want to cut down the time your customers wait to get a problem resolved. Even better, you want agents who come to work ready and passionate to help people. These are the reasons you build all those processes, track those key performance indicators and choose the right tools.


Setting and sharing clear expectations, then measuring agent performance against them, are foundational to a successful call center quality assurance program. Bringing clarity to goals and expectations gives you a way to evaluate your agents, so they know exactly how they’re measured (and why it matters).

To keep up with the expectations of your customers and manage new hires, your call center quality assurance program needs to progress beyond cherry-picking interactions to review and filling out a few agent scorecards.

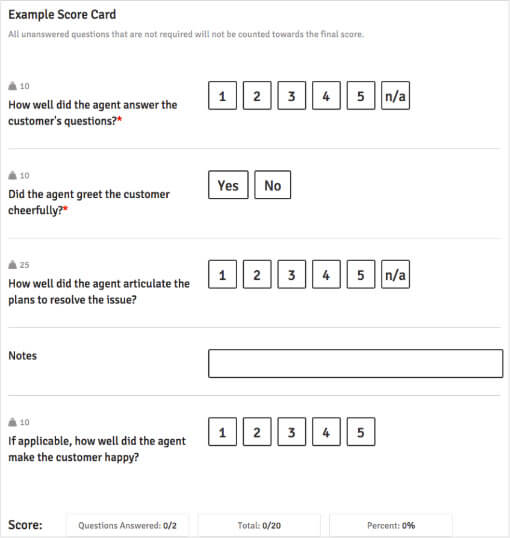

And, when you do use scorecards to grade your agents, choose criteria that matter to your customers, not just your processes. When a scorecard is too heavily weighted toward internal processes, you’ll see a disparity between your QA scores and your CSAT scores. That doesn’t give you a clear picture of what your customers really want.

So, how do you manage and track your contact center’s quality in a way that’s mutually beneficial to you, your agents and your customers? Let’s take some steps back and re-evaluate how to build and improve your QA program.

Defining Quality for Your Contact Center

The word quality is ambiguous. Quality means different things to different people. And it means something different in every industry. Quality in a health care center or in a restaurant looks different from what’s expected in a contact center. So, before jumping into specifics about your QA program, define what quality means to you and your contact center agents.

Then, detail the objectives you want to achieve through your QA program. These objectives will be used to evaluate your agents and how you’re tracking towards your contact center goals, so get specific.

Your call center quality assurance program should evaluate quality on behalf of your customers, your company and your employees. So, define your version of quality with all three categories in mind. It’s helpful to involve each group of people in the process, so you can get more feedback on what matters most.

Discuss quality metrics and coaching methods with key stakeholders and company leaders. Involve agents in calibration sessions, so they can craft standards that are realistic and motivating to them. And, pay attention to your customers’ expectations to see what they want. Did you get five similar complaints about wait times on your CSAT survey? Then, add hold times to your quality scorecard or your coaching and training plan.

Determine Your Criteria for Quality

Evaluate quality from the perspective of your contact center and from the perspective of your customers.

Determine what quality looks like for each of your channels. What makes a phone call successful is different from what makes an email successful. If an agent spends 30 minutes on an email, they’d better have some Hemingway-level storytelling to show for it. But if an agent spends 30 minutes on a phone call, they could’ve been rehashing past issues, training customers or building a conversational relationship.

Choose KPIs that fit your channels and are important to the level of service you want to deliver. How will you measure customer satisfaction? Will you pay attention to Average Handle Time? Or First Contact Resolution? What metrics tell you the most about your customers’ overall happiness and loyalty?

You may think keeping AHT low is important to the customer, but in reality, sometimes a hasty phone call can lead to a dissatisfied customer whose problem gets magnified through the interaction. Take time to uncover what’s important to your customers. You’ll need to do a little detective work and analyze call transcriptions, recordings and customer surveys.

Use your contact center platform to review reports and interactions for actionable data.

Pull the recordings and transcripts for 10 interactions with high CSAT scores. Review those interactions and try to uncover what positively influenced the sky-high customer rating. Next, pull 10 interactions with low CSAT scores, and do the same. But this time, try to figure out what negatively influenced the rating.

Compare the two lists and determine what matters most to your customers. For example, if both high and low CSAT interactions have low AHT, then AHT probably isn’t a major factor impacting your quality. But, if low AHT occurs more often in calls with high CSAT, it may be an indicator that your customers equate fast interactions with good service. With a little research, you can narrow down what your call center quality assurance scorecard should include.
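If your platform lets you export interactions, a comparison like the one above can be roughed out in a few lines of Python. This is a minimal sketch, not a feature of any particular contact center tool: the field names `csat` and `aht_seconds`, the 1-to-5 CSAT scale, the cutoffs for "high" and "low," and the sample data are all assumptions made up for illustration.

```python
from statistics import mean


def compare_aht(interactions, high_csat=4, low_csat=2):
    """Return the mean AHT (in seconds) for high- and low-CSAT interactions.

    Assumes each interaction is a dict with hypothetical keys
    'csat' (1-5 rating) and 'aht_seconds' (handle time).
    """
    high = [i["aht_seconds"] for i in interactions if i["csat"] >= high_csat]
    low = [i["aht_seconds"] for i in interactions if i["csat"] <= low_csat]
    return {
        # None means there were no interactions in that group
        "high_csat_mean_aht": mean(high) if high else None,
        "low_csat_mean_aht": mean(low) if low else None,
    }


# Made-up sample data, for illustration only
sample = [
    {"csat": 5, "aht_seconds": 240},
    {"csat": 5, "aht_seconds": 300},
    {"csat": 1, "aht_seconds": 600},
    {"csat": 2, "aht_seconds": 720},
    {"csat": 3, "aht_seconds": 400},
]

result = compare_aht(sample)
print(result)
```

If the two averages land close together, AHT likely isn’t what separates your happy customers from your unhappy ones. With only 10 interactions in each group, treat any gap as a hint to investigate, not as proof.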

Building a Scorecard and Coaching on What You Find

With your standards defined and your measurements selected, prioritize your version of quality by building out a scorecard for your agents. Agents who have a clear set of guidelines to follow are better equipped to keep customers happy.

With clear criteria and a dose of empowerment, your customers can say almost anything, and your agents will still know how to deliver top-notch service. Assure your agents that if they work toward a good scorecard, they don’t need to feel anxious about the outcome. And, using graded criteria makes it easier for you to pinpoint where agents need more coaching and training to see success.

Once you’ve pinpointed where agents need to improve, you can step beyond simple, surface-level feedback. To kick your QA program up a notch, use in-line training to leave comments and contextual feedback on your agents’ interactions. Flag their transcripts or call recordings with feedback at the exact moment where they need to improve. And, share positive encouragement at moments in the interaction where they nailed it. The best tools support conversational coaching, too, letting agents ask questions or clarify how they handled an interaction.

Testing Your Scorecard

Create a collective sense of responsibility for success in your contact center. Rope agents in to test scorecards and get their feedback on the metrics you include. Do agents feel the quality metrics you picked are directly actionable by them? And, do they agree that these are the key metrics that impact customer happiness? Including your agents in the process promotes buy-in for your QA program and boosts confidence in your agents’ ability to do their jobs.

Testing is crucial because while your scorecard may seem brilliantly constructed, the criteria might miss the mark when you apply them. Do your calculations add up? Do the KPIs make sense for your team? Testing lets you find the missing spices in case key ingredients get lost during preparation.

Enlist all your team members to help, so you have multiple viewpoints to shape the final product. Listen to your agents’ input on the functionality and accuracy of the scorecard. Ensure that every agent understands how they’re being scored and what criteria to meet.

And, walk them through the coaching and training process that will follow. For instance, if agents get below 80% on their scorecard, they should expect in-line feedback to review. And, if they get below 60%, they’ll have to press pause on interactions and complete a microlearning lesson you shipped to their queue. Then, after corrective action, they can hop back in to help customers.
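To make a rule like this easy for agents to see and for you to test, you could write it down as a small function. The sketch below uses the 80% and 60% thresholds from the example above. The function name and action labels are hypothetical, and it assumes that an agent who scores below 60% also gets the in-line feedback that applies below 80%.

```python
def coaching_actions(score_pct: float) -> list[str]:
    """Map a scorecard percentage to follow-up actions.

    Thresholds come from the example in the text; the action
    labels are hypothetical names chosen for illustration.
    """
    if not 0 <= score_pct <= 100:
        raise ValueError("score_pct must be between 0 and 100")

    actions = []
    if score_pct < 80:
        # Below 80%: agent reviews in-line feedback
        actions.append("review_inline_feedback")
    if score_pct < 60:
        # Below 60%: agent also pauses interactions and completes a lesson
        actions.append("pause_interactions")
        actions.append("complete_microlearning")
    return actions


for score in (85, 75, 59):
    print(score, coaching_actions(score))
```

Writing the rule out this way also settles the edge cases up front: here, a score of exactly 80% or 60% does not trigger the lower tier, which is worth confirming with your team during testing.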

What’s more, you should refine and adapt your QA program as you go. While you coach your agents through scorecards, you might notice new trends in customer expectations or see metrics that aren’t helping outcomes. As your company changes and customer expectations shift, the definition of high-quality service in your contact center will move, and your QA program should move with it.

Choose a contact center vendor with the QA tools you need to deliver outstanding service with every interaction. Get 101 questions to vet vendors in your next RFP.

We originally published this post on February 16, 2018, and we updated it for new insight on May 21, 2020.